ADDRAR IV is an AI-powered catamaran that detects, navigates to, and collects floating debris — built by five engineering seniors at Texas A&M University Corpus Christi.

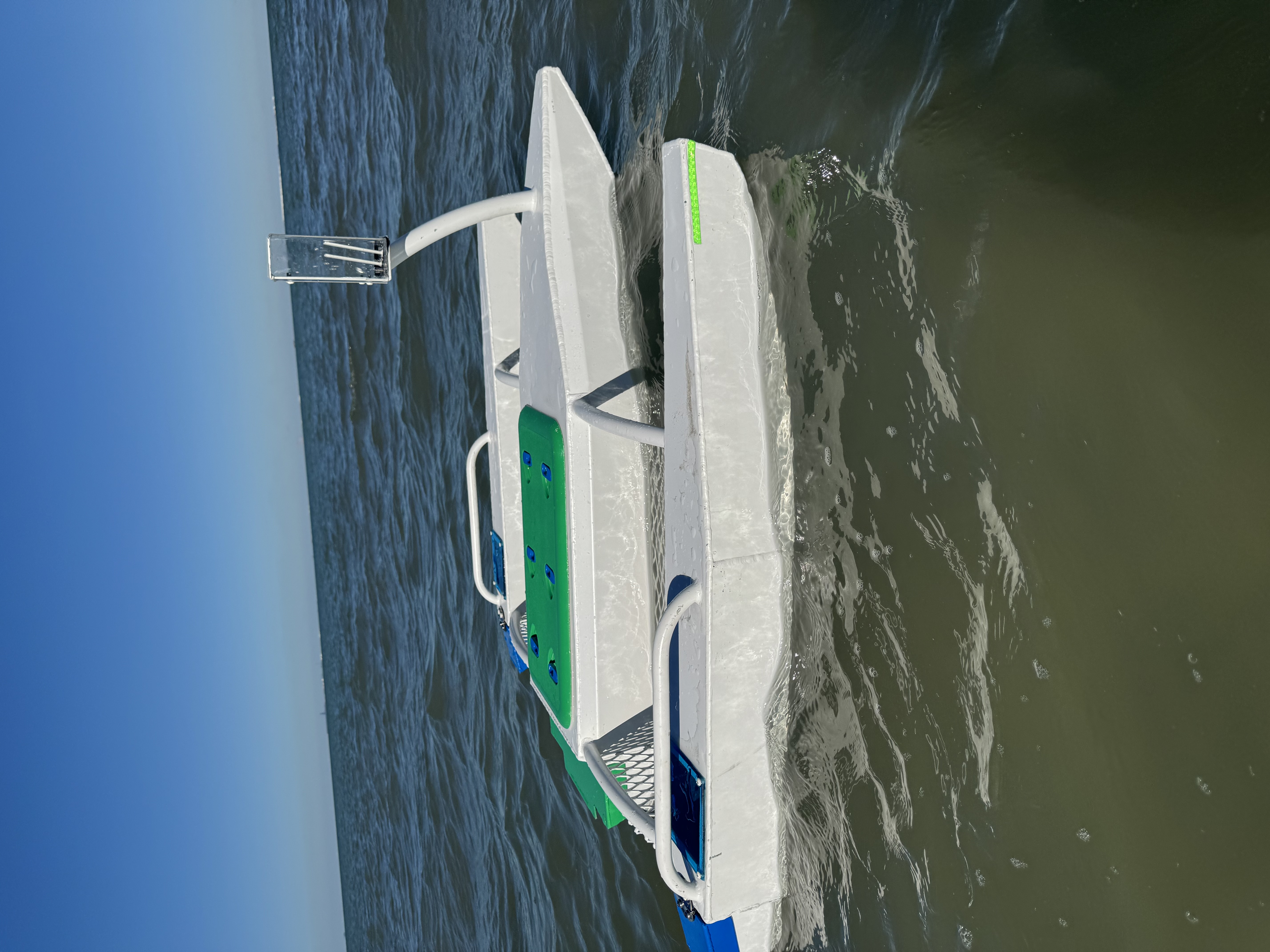


About the Project

ADDRAR IV is the latest iteration of an autonomous surface vehicle (ASV) built by the Engineering Senior Design team at Texas A&M University Corpus Christi. With an estimated 5.25 trillion pieces of plastic debris in the ocean and roughly 269,000 tons floating on the surface, the team set out to build a low-cost, repeatable cleanup tool for coastal communities.

The system integrates a YOLO11s vision model on a Raspberry Pi 5 with an ESP32 motor controller for real-time debris detection and collection — removing trash from the waterway without human intervention during the mission.

Core Capabilities

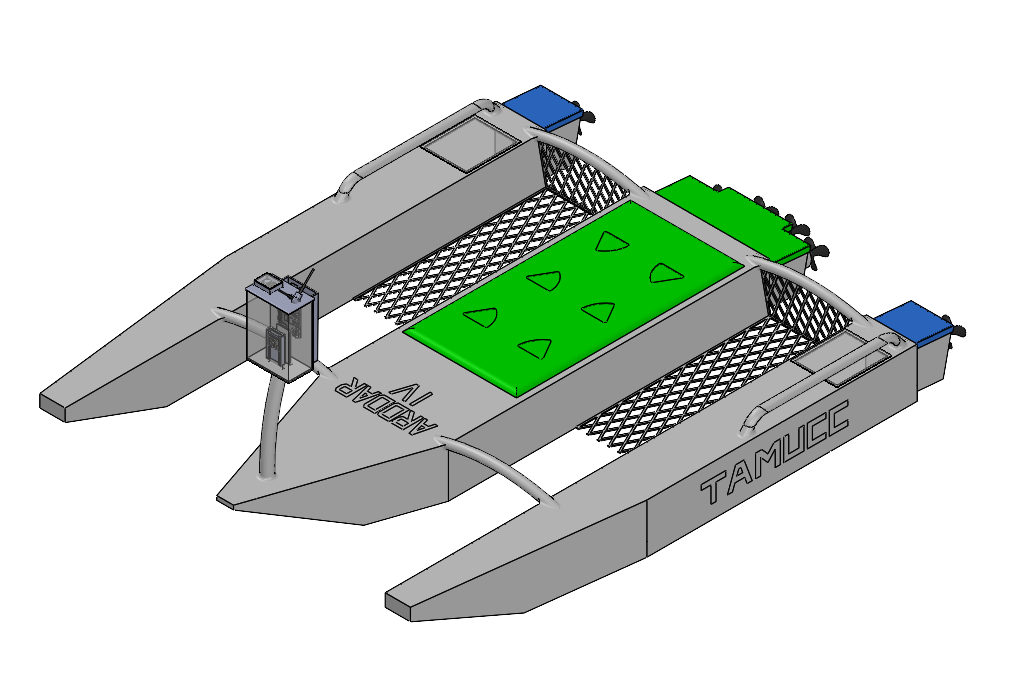

A purpose-built marine platform combining autonomous navigation, debris detection, and collection into a single deployable unit.

Powered by a Raspberry Pi 5 and Pi Camera 3 Wide, the onboard AI vision system identifies floating debris in real time with a wide-angle field of view — distinguishing trash from natural objects on the water surface.

Integrated mesh netting between the twin catamaran hulls captures floating debris as the vessel moves forward, retaining collected material without requiring manual retrieval mid-mission.

Six waterproof ring motors with propellers provide omnidirectional thrust, enabling precise maneuvering in tight spaces like marinas, harbors, and coastal inlets.

Seamlessly switch between full AI autonomy and manual remote control, allowing operators to intervene for targeted collection or take over in complex scenarios.

Twin-hull design provides excellent stability in varying water conditions while creating a natural channel for debris to flow into the collection mesh.

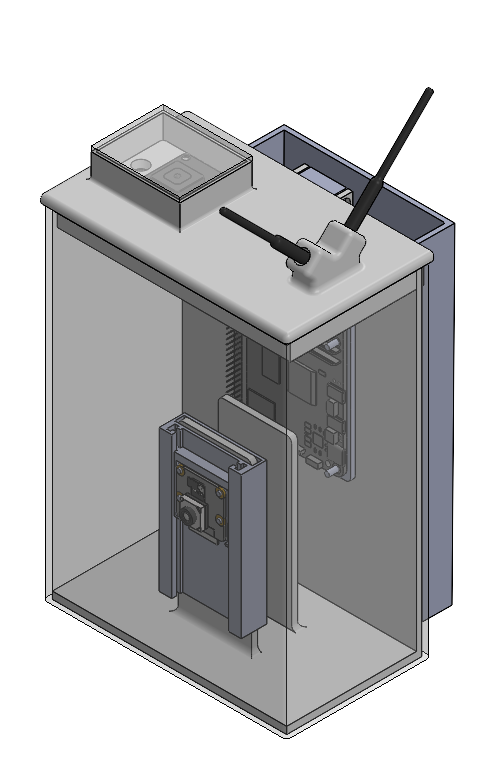

All electronics are housed in sealed enclosures with protected ESC controllers, ensuring reliable operation in marine conditions with splash and spray protection.

AI Vision System

A trained computer vision model running on a Raspberry Pi 5 processes live video from the Pi Camera 3 Wide — detecting floating debris and autonomously steering the vessel toward it for collection.

The Pi Camera 3 Wide captures a continuous video stream with a 120° diagonal field of view, scanning the water surface ahead and to both sides of the vessel.

Each frame is passed to the trained model on the Raspberry Pi 5, which runs inference in real time to pick out debris objects against the water background.

The model draws bounding boxes around detected debris, classifying objects by type (plastic, wood, foam, mixed waste) and assigning a confidence score.

Detected debris coordinates are passed to the navigation system, which steers all six propellers to intercept and collect the target using proportional control.
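
The last two steps above (confidence-scored bounding boxes feeding a proportional controller) can be sketched roughly as follows. This is a minimal illustration, not the team's code: the frame width, confidence threshold, gain, base throttle, and the reduction of six propellers to a port/starboard pair are all assumptions made for the example, and `Detection`, `pick_target`, and `steering_command` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

FRAME_WIDTH_PX = 640   # assumed inference frame width
CONF_THRESHOLD = 0.6   # assumed minimum confidence to act on a detection
KP = 0.8               # assumed proportional gain
BASE_THROTTLE = 0.5    # assumed forward throttle on a -1..1 scale


@dataclass
class Detection:
    label: str          # e.g. "plastic", "wood", "foam", "mixed waste"
    confidence: float   # model confidence score, 0..1
    x_min: float        # left edge of the bounding box, in pixels
    x_max: float        # right edge of the bounding box, in pixels


def pick_target(detections: Sequence[Detection]) -> Optional[Detection]:
    """Return the most confident detection above the threshold, if any."""
    confident = [d for d in detections if d.confidence >= CONF_THRESHOLD]
    return max(confident, key=lambda d: d.confidence, default=None)


def clamp(value: float, low: float = -1.0, high: float = 1.0) -> float:
    return max(low, min(high, value))


def steering_command(target: Detection,
                     frame_width: float = FRAME_WIDTH_PX) -> Tuple[float, float]:
    """Proportional steering: turn rate is KP times the horizontal offset.

    The offset is normalized to -1 (far left) .. +1 (far right). A target to
    the right raises port thrust and lowers starboard thrust, turning the
    vessel toward it.
    """
    center_x = (target.x_min + target.x_max) / 2.0
    half_width = frame_width / 2.0
    error = (center_x - half_width) / half_width
    turn = KP * error
    return clamp(BASE_THROTTLE + turn), clamp(BASE_THROTTLE - turn)


detections = [Detection("plastic", 0.91, 420, 500),
              Detection("foam", 0.35, 100, 160)]
target = pick_target(detections)
if target is not None:
    port, starboard = steering_command(target)
    print(target.label, round(port, 3), round(starboard, 3))
```

In this sketch the low-confidence foam detection is ignored, and the plastic target to the right of center produces more port thrust than starboard thrust. A real controller would also need to map the two outputs onto the six ESCs and handle the case where no target is visible.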

The detection model was trained on Google Colab using a custom Roboflow dataset of labeled aquatic debris images, then exported as ONNX and deployed to the Raspberry Pi 5.

IoT & Telemetry

ADDRAR streams real-time telemetry data from its onboard sensors via IoT, giving operators full visibility into the vessel's status, environment, and mission progress.

Battery health and voltage, debris collected, boat speed, and GPS location are streamed live, updated every 2 seconds, and accessible from any network.

Debris collected and boat speed are displayed as live graphs on the dashboard. A full data export is available as an Excel report for post-mission analysis.

The dashboard is exposed securely through a Cloudflare Tunnel over a T-Mobile 4G connection, enabling remote access from outside the local network.

Stream real-time video from the Pi Camera 3 Wide directly to the dashboard — first-person view with live debris detection overlay.

The live dashboard runs on the robot's Raspberry Pi. When the robot is powered on and connected, operators can stream video, view telemetry, and send commands.
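
As a rough illustration of the telemetry described above, a single sample could be serialized and sent on the 2-second cadence like this. The field names, units, JSON encoding, and the `read_sample`/`send` callables are assumptions for the sketch; the page does not specify the actual message format or transport.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable

UPDATE_PERIOD_S = 2.0  # update interval stated on this page


@dataclass
class Telemetry:
    timestamp: float        # seconds since the Unix epoch
    battery_voltage: float  # volts, propulsion pack
    debris_count: int       # items collected so far in this mission
    speed_mps: float        # boat speed in metres per second (assumed unit)
    latitude: float         # decimal degrees
    longitude: float        # decimal degrees


def encode(sample: Telemetry) -> str:
    """Serialize one sample as a JSON object with stable key order."""
    return json.dumps(asdict(sample), sort_keys=True)


def publish_loop(read_sample: Callable[[], Telemetry],
                 send: Callable[[str], None],
                 cycles: int,
                 period_s: float = UPDATE_PERIOD_S,
                 sleep: Callable[[float], None] = time.sleep) -> None:
    """Read, encode, and send one sample per period for a fixed cycle count."""
    for _ in range(cycles):
        send(encode(read_sample()))
        sleep(period_s)


# Illustrative values only; the coordinates are a placeholder near Corpus Christi.
sample = Telemetry(time.time(), 23.9, 4, 0.8, 27.7125, -97.3245)
publish_loop(lambda: sample, print, cycles=1, sleep=lambda s: None)
```

Passing `sleep` and `send` as parameters keeps the loop testable on a desktop without the robot attached; on the vessel, `send` would be whatever pushes data to the dashboard.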

Environmental Intelligence

Every detected item is logged with GPS coordinates, debris class, and timestamp. The dashboard renders a time-evolving pollution heatmap — scrub the slider to watch debris accumulate across a mission, filter by debris type, and compare pollution patterns across missions and seasons.
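
One simple way to build such a heatmap is to bin the logged detections into fixed-size latitude/longitude cells and count only the entries up to the slider's current time. The sketch below assumes that approach; the cell size, the `LogEntry` fields, and the `heatmap` function are hypothetical and are not taken from the dashboard's code.

```python
import math
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Optional

CELL_DEG = 0.0001  # assumed cell size; about 11 m of latitude per cell


@dataclass(frozen=True)
class LogEntry:
    timestamp: float     # seconds since mission start (assumed convention)
    latitude: float      # decimal degrees
    longitude: float     # decimal degrees
    debris_class: str    # e.g. "plastic", "wood", "foam", "mixed waste"


def heatmap(entries: Iterable[LogEntry],
            up_to: float,
            debris_class: Optional[str] = None,
            cell_deg: float = CELL_DEG) -> Counter:
    """Count detections per grid cell up to time `up_to`.

    `up_to` plays the role of the time slider; `debris_class` is the
    optional debris-type filter.
    """
    counts: Counter = Counter()
    for entry in entries:
        if entry.timestamp > up_to:
            continue
        if debris_class is not None and entry.debris_class != debris_class:
            continue
        cell = (math.floor(entry.latitude / cell_deg),
                math.floor(entry.longitude / cell_deg))
        counts[cell] += 1
    return counts


log = [
    LogEntry(10.0, 27.71255, -97.32455, "plastic"),
    LogEntry(20.0, 27.71256, -97.32456, "foam"),
    LogEntry(30.0, 27.71355, -97.32455, "plastic"),
]
for t in (15.0, 30.0):
    print(t, sum(heatmap(log, up_to=t).values()), "detections so far")
```

Calling `heatmap` with increasing `up_to` values reproduces the accumulate-over-time view, and the same counts could be compared across missions by running it on separate logs.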


Predictive Intelligence

Using real-time wind and weather data from NOAA, combined with historical debris collection patterns, ADDRAR predicts where debris is most likely to concentrate — so missions can be planned proactively.


Impact & Applications

Autonomous debris removal creates measurable impact across environmental, municipal, and maritime domains.

Continuous autonomous deployment removes plastic and waste before it sinks, breaks down, or harms marine life — operating in areas that are difficult or dangerous for human crews.

City stormwater systems and urban waterways accumulate debris rapidly after rain events. ADDRAR provides a cost-effective automated cleanup alternative to manual crew dispatch.

Harbors and marinas require constant debris management to protect vessel hulls, propellers, and infrastructure. ADDRAR handles routine surface debris autonomously.

Competitive Landscape

Commercial autonomous cleanup vessels like the RanMarine WasteShark+ Pro retail for $40,000+. ADDRAR IV delivers comparable capability — plus features the commercial leader has not shipped — at under $2,000 in parts.

| Feature | WasteShark+ Pro | ADDRAR IV |
|---|---|---|
| Autonomous navigation | ✓ | ✓ |
| AI debris classification | Hardware only, not shipped | ✓ Fully implemented |
| Debris-type breakdown (live) | ✗ | ✓ 4 classes |
| Pollution heatmap | Static | ✓ Time-evolving |
| Wildlife-aware routing | ✗ | Planned |
| Live telemetry dashboard | ✓ | ✓ |
| Open data export | Excel | CSV / JSON / GeoJSON |
| Hot-swap battery | ✓ | ✓ (<5 min) |
| Unit cost | $40,000+ | < $2,000 BOM |

Test Results

Results from controlled pool trials and coastal water testing sessions conducted by the TAMUCC team.

Media & Results

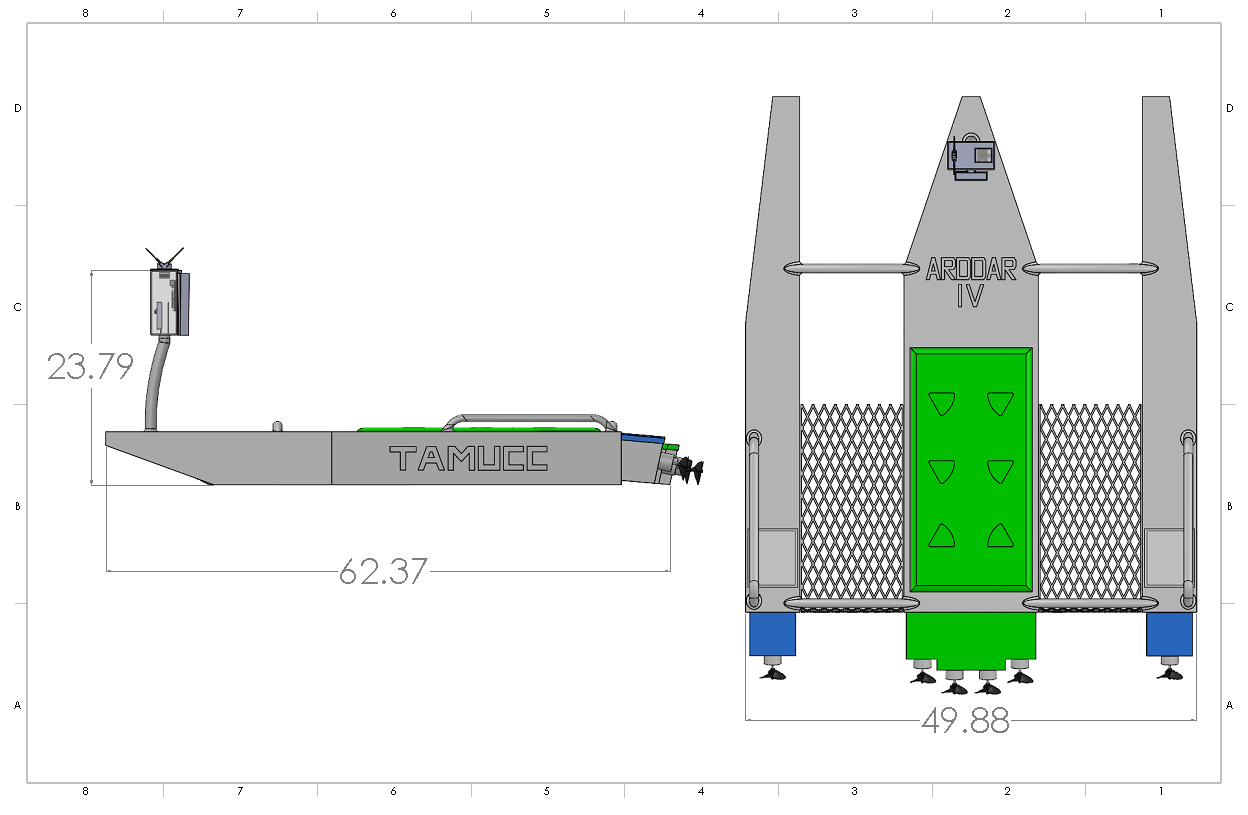

Photos, 3D design renders, engineering drawings, and test run results from the ADDRAR IV project.

3D Render — Exploded

Detection Accuracy Results

Technical Specifications

Full technical specs and bill of materials for ADDRAR IV.

| Specification | Value |
|---|---|
| Platform | Catamaran ASV (twin-hull) |
| Compute | Raspberry Pi 5 (8 GB LPDDR4X, 128 GB microSD) |
| Camera | Pi Camera 3 Wide, 25×24 mm, 0.022 lbs |
| Propulsion | 6× HobbyStar 3660 Brushless, 1550 KV, 50A max |
| ESC | 120A, 6S compatible (×6) |
| Propeller | 3-blade aluminum, D=46 mm (1.81 in), P/D=1.4 |
| Motor Controller | ESP32 via iBus (Arduino Core) |
| Battery (propulsion) | 3× Turnigy 6S LiPo 22.2V 20Ah 12C (XT-90) |
| Battery (electronics) | 1× Turnigy 2S LiPo 2.2Ah 40C (XT-60) |
| Remote Control | FlySky FS-i6X, 2.4 GHz, 150 m range |
| GPS | Adafruit GPS Module |
| Communication | Cloudflare Tunnel / T-Mobile 4G |
| AI Model | YOLO11s / ONNX via Roboflow |
| Hull Material | Aluminum structure + 3D printed parts |
| Length | 61.85 in |
| Width | 49.88 in |
| Height | 23.75 in |
| Max Weight | <70 lbs |
| Debris Capacity | Up to 15 lbs |
| RC Range | 140 m perimeter |
| Min Runtime | ≥20 minutes |
| Est. Cost | ~$1,645 (electronics, motors, batteries) / $2,500 max |

| Component | Description | Qty |
|---|---|---|
| RPi 5 (8 GB) | Main compute board, 128 GB microSD | 1 |
| Pi Cam 3W | Wide-angle vision camera | 1 |
| HobbyStar 3660 | Brushless motor, 1550 KV, 50A max | 6 |
| ESC 120A | 6S electronic speed controller | 6 |
| Propeller | 3-blade aluminum, 46 mm, P/D=1.4 | 6 |
| 6S LiPo 20Ah | Turnigy propulsion battery (22.2V, 12C) | 3 |
| 2S LiPo 2.2Ah | Turnigy electronics battery (40C) | 1 |
| ESP32 | Motor controller via iBus + Arduino | 1 |
| FlySky FS-i6X | 2.4 GHz RC transmitter & receiver | 1 |
| Adafruit GPS | GPS positioning module | 1 |
| Voltage Divider | 22.2V → 3.3V for ESP32 battery read | 1 |
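
The voltage divider in the BOM scales the propulsion pack voltage down to a level the ESP32 can read. As a rough worked example (not the team's actual circuit): a 6S LiPo sits at 22.2 V nominal but reaches about 25.2 V at full charge (4.2 V per cell), so a divider sized to map exactly 22.2 V to 3.3 V would put roughly 3.75 V on the pin with a full pack. The sketch below therefore sizes for full charge. The resistor values are hypothetical, and the ADC is assumed to be an ideal, linear 12-bit converter with a 3.3 V full scale; real ESP32 ADC readings are nonlinear near the ends of the range and usually need calibration.

```python
R_TOP_OHM = 100_000.0     # hypothetical resistor from battery + to the ADC pin
R_BOTTOM_OHM = 15_000.0   # hypothetical resistor from the ADC pin to ground
ADC_MAX_COUNT = 4095      # 12-bit ADC
ADC_FULL_SCALE_V = 3.3    # assumed ideal full-scale voltage
PACK_NOMINAL_V = 22.2     # 6S LiPo nominal voltage (from the BOM)
PACK_FULL_V = 25.2        # 6S LiPo at 4.2 V per cell


def divider_ratio(r_top: float = R_TOP_OHM,
                  r_bottom: float = R_BOTTOM_OHM) -> float:
    """Fraction of the battery voltage that appears at the ADC pin."""
    return r_bottom / (r_top + r_bottom)


def pin_voltage(battery_v: float) -> float:
    """Voltage at the ADC pin for a given battery voltage."""
    return battery_v * divider_ratio()


def battery_voltage_from_adc(count: int) -> float:
    """Invert the divider: convert a raw ADC count back to battery volts."""
    pin_v = count / ADC_MAX_COUNT * ADC_FULL_SCALE_V
    return pin_v / divider_ratio()


print(f"ratio          = {divider_ratio():.4f}")
print(f"pin at nominal = {pin_voltage(PACK_NOMINAL_V):.3f} V")
print(f"pin at full    = {pin_voltage(PACK_FULL_V):.3f} V")
print(f"naive 22.2->3.3 divider at full = {PACK_FULL_V * 3.3 / PACK_NOMINAL_V:.3f} V")
```

With these example values the pin stays just under 3.3 V at full charge, at the cost of using slightly less of the ADC range at nominal voltage.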

Engineering Lineage

ADDRAR is a multi-year TAMUCC research lineage. Each generation incorporates lessons learned from previous trials.

Manual-control proof of concept. Validated hull form and propulsion.

Added telemetry + GPS waypoint following. Introduced collection net.

First vision system. Basic object detection with fixed-rule heuristics.

Full YOLO11s AI classification, 6-propeller omnidirectional drive, live IoT dashboard, and autonomous mission planning.

The Team

Senior design team at Texas A&M University Corpus Christi, College of Engineering & Computer Science.

Common Questions

Approximately 45 minutes of continuous autonomous operation on our current LiPo battery pack. Battery swap takes under 5 minutes.

The onboard YOLO model is trained on multiple classes including plastic bottles and bags, foam, and mixed waste. It was trained on thousands of labeled images captured in local coastal conditions.

Yes. All electronics are sealed in waterproof enclosures, and the hull and hardware are selected for saltwater compatibility.

The confidence threshold is tuned conservatively to minimize false positives. Operators can also intervene in real time via the dashboard — the vessel supports seamless switching between autonomous and manual control.

Under $2,000 in parts (full BOM on the Specs page). Commercial alternatives like the RanMarine WasteShark+ Pro retail for $40,000+.

The vessel navigates with proportional control driven by GPS, IMU data, and vision-based detection. It slows and pauses when an obstacle is detected in its path. Wildlife avoidance is also being trained into the AI model.

Live System Access

Access the live control and monitoring interface. The dashboard runs on the robot's Raspberry Pi — only available when the robot is powered on and connected.

The robot streams live video and telemetry and accepts remote commands through a Cloudflare Tunnel hosted on the onboard Raspberry Pi 5. When the robot is on, the dashboard is live at dashboard.addrarlivevideo.com.

If the Pi is powered off or disconnected, the dashboard will simply be unavailable — the info site remains online regardless.